The authors are, respectively, senior product manager and evangelist at ALC NetworX, and business development manager, OEM & Partnerships, at Ross Video.

Remote production is clearly a hot topic today, as companies around the world race to maintain their existing workflows with their talent dispersed in many off-site locations. Such production is the new normal and — now that we have experienced its potential — is likely to continue to be a focus in the future.

While many solutions have been hastily cobbled together, there is a need for higher-quality productions with lower latency that integrate easily with existing equipment.

Starting Point

We started with a basic question: Can we send Ravenna/AES67 traffic over the public infrastructure and over long distances?

Then we wondered, would we be able to listen to something resembling audio? Would it be good quality?

It is one thing for a single company with their own equipment to do it, but could we also interoperate with equipment from other companies? After all, this is the whole point behind Ravenna and AES67.

Finally, we also wondered how we would do it and what challenges we would face.

Before digging into the setup and challenges that needed to be overcome, it is important to understand that Ravenna and AES67, even though they use IP, are designed to be used in local-area networks (LANs).

Even so, Ravenna and AES67 have been proven and are used commercially in wide-area network (WAN) applications across private networks, although the standards never contemplated such use.

Private dedicated networks, whether owned or leased, are well-architected, have predictable behavior and come with performance guarantees.

Public networks, on the other hand, are the equivalent of the “wild west.” You can’t control them. They are congested and unpredictable. Public networks suffer from packet loss due to link failures and have large, sometimes dramatic, latency due to packet re-transmissions.

This makes the public environment hostile for Ravenna and AES67!

Challenges

There are three main challenges: latency and packet jitter; packet loss; timing and synchronization.

Fortunately, the increased latency and packet jitter of the public network are handled by Ravenna by design, through the use of large receiver buffers that must be able to handle a minimum of 20 ms. AES67 requires only 3 ms but also recommends 20 ms.

Most well-designed Ravenna solutions, like all the equipment used in this experiment, have even bigger buffers and other associated techniques that can compensate for the added delay.
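To put those buffer figures in perspective, the short Python sketch below converts a buffer depth in milliseconds into samples, packets and payload bytes. The stream parameters (48 kHz sampling, 1 ms packet time, two channels of 24-bit audio) are assumptions chosen for illustration; they are not taken from the demo.

```python
import math

def buffer_requirements(buffer_ms, sample_rate_hz=48_000, packet_time_ms=1.0,
                        channels=2, bytes_per_sample=3):
    """Estimate what a receiver must hold to buffer `buffer_ms` of audio.

    All defaults are illustrative assumptions, not values from the demo.
    """
    samples_per_channel = round(sample_rate_hz * buffer_ms / 1000)
    packets = math.ceil(buffer_ms / packet_time_ms)
    payload_bytes = samples_per_channel * channels * bytes_per_sample
    return {
        "samples_per_channel": samples_per_channel,
        "packets": packets,
        "payload_bytes": payload_bytes,
    }

# Compare the AES67 minimum (3 ms) with the 20 ms figure mentioned above.
for depth_ms in (3, 20):
    print(depth_ms, "ms ->", buffer_requirements(depth_ms))
```

Under these assumptions, 20 ms corresponds to 960 samples per channel, or 20 packets. The same function can be called with larger values to see how the buffer grows when much higher latencies are configured.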

The AES Standards Committee working group SC-02-12-M is working on guidelines for AES67 over WAN applications, and a key recommendation is to increase the buffer size within devices.

Packet loss is another important challenge. Ravenna and AES67 are not designed to cope with dropped packets.

Fortunately, there are other transport protocols that are architected to deal with dropped packets without introducing a lot of extra latency. These include Secure Reliable Transport (SRT), Zixi and Reliable Internet Stream Transport (RIST), but there are many others.

We solved the challenge of packet loss by encapsulating Ravenna traffic within SRT.

The final but significant challenge is timing and synchronization. We started with a separate Precision Time Protocol (PTP) Grandmaster (GM), synchronized to GPS, at each site. All equipment at each location was locked to PTP locally in order to maintain synchronization among all participating devices. No PTP packets were sent across the WAN or through the cloud; doing so would simply not be practical, because packet jitter is too high to achieve adequate synchronization precision.

The Demo Setup

These musings resulted in an ambitious proof-of-concept demo involving equipment from three Ravenna partners — Ross, Merging Technologies and DirectOut — across four sites over two continents, North America and Europe, that leverages the public cloud infrastructure from Amazon Web Services, or AWS.

Fig. 1 gives a generalized view of the demo setup. Ross equipment in Ottawa, Canada, interfaced with AWS Virginia, while the Merging and DirectOut setups in Grenoble, France; Lausanne, Switzerland; and Mittweida, Germany, communicated with AWS Frankfurt.

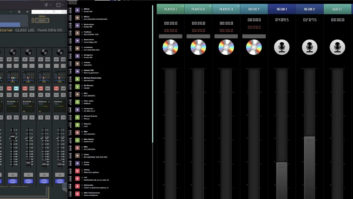

On-site in Ottawa, Mittweida, Lausanne and Grenoble, various Ravenna/AES67 gear from Ross Video, DirectOut and Merging was used to create and receive standard AES67 streams. Gateways on the local networks were used to wrap these AES67 streams into SRT flows, which in turn were handed off to the AWS cloud access points using the public internet.

The flows were then transported within the AWS cloud between the access points, from where they were handed off (secured by SRT) to the local SRT gateways via public internet again. The gateways unwrapped the AES67 streams so that they appeared unchanged in the local destination networks and could be received by the Ravenna devices.

All SRT gateways were built from Haivision’s open-source SRT implementation. While Ross Video and Merging used separate host machines to run the SRT gateways, DirectOut was able to integrate the gateway functionality into its Prodigy.MP Multi-I/O converter.

Since all Ravenna devices were synchronized to the same time source via GPS, the generated streams received exact RTP timestamps that were transparently transported through the cloud, so that a deterministic and stable playout latency and inter-stream alignment could be configured at the receiving ends. Since streams were not processed or altered in the cloud or by the SRT gateways, the audio data was bit-transparently passed through with full quality.
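The role of the RTP timestamps can be illustrated with a minimal Python sketch. It assumes a 48 kHz media clock and a receiver that knows the current media-clock value from its local PTP lock; the function names and the example link offset are hypothetical and do not describe any vendor's implementation.

```python
RTP_TS_MODULO = 1 << 32  # RTP timestamps are 32-bit values and wrap around

def playout_timestamp(rtp_timestamp, link_offset_ms, sample_rate_hz=48_000):
    """Media-clock value at which a packet's first sample should be played."""
    offset_samples = round(link_offset_ms * sample_rate_hz / 1000)
    return (rtp_timestamp + offset_samples) % RTP_TS_MODULO

def samples_until_playout(rtp_timestamp, now_timestamp, link_offset_ms,
                          sample_rate_hz=48_000):
    """Signed number of samples left before playout (negative means too late)."""
    due = playout_timestamp(rtp_timestamp, link_offset_ms, sample_rate_hz)
    diff = (due - now_timestamp) % RTP_TS_MODULO
    if diff >= RTP_TS_MODULO // 2:  # interpret large values as negative
        diff -= RTP_TS_MODULO
    return diff

# Two packets sampled at the same instant at different sites carry the same
# RTP timestamp, so the same link offset yields the same playout instant.
print(playout_timestamp(1000, link_offset_ms=400))
print(samples_until_playout(1000, now_timestamp=5800, link_offset_ms=400))
```

Because the playout instant depends only on the timestamp and the configured offset, and not on when the packet happened to arrive, streams from different sites stay aligned as long as each packet arrives before its playout instant.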

Because SRT recovers lost packets by retransmitting them, a higher latency setting needed to be configured to accommodate the larger packet delay variation (PDV) caused by occasional retransmissions.

Thankfully, the Ravenna receiver devices used in this demo provided ample buffering capacity to allow adequate configuration. In practice, buffer settings (= overall latency setting) ranged from 200 to 600 ms, depending on the quality and bandwidth of the local internet connection.
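A rough way to reason about such a latency setting is a simple budget: the one-way network delay, plus about one round trip for every retransmission attempt that should be survivable, plus a margin for ordinary jitter. The Python sketch below expresses that budget. It is a simplified model under stated assumptions, not the algorithm SRT or any receiver uses, and the example numbers are hypothetical rather than measurements from the demo.

```python
import math

def suggested_link_offset_ms(one_way_delay_ms, rtt_ms, retransmit_rounds=2,
                             jitter_margin_ms=20.0, step_ms=10.0):
    """Estimate a receiver latency setting that tolerates retransmissions.

    Assumes each retransmission round costs roughly one round-trip time
    (loss report travels back, repaired packet travels forward). The result
    is rounded up to a multiple of `step_ms` for convenient configuration.
    """
    budget = one_way_delay_ms + retransmit_rounds * rtt_ms + jitter_margin_ms
    return math.ceil(budget / step_ms) * step_ms

# Hypothetical link: 50 ms one-way delay, 100 ms round-trip time.
print(suggested_link_offset_ms(one_way_delay_ms=50, rtt_ms=100))
```

Allowing for more retransmission rounds or a longer round trip raises the result quickly, which is consistent with settings of several hundred milliseconds on weaker connections.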

A monitoring web page connected to a local loopback server hosted on AWS enabled listening to the live streams via HTTP within any browser, including display of live VU metering and accumulated (unrecoverable) packet loss per stream.

More information and the live demo page are available on a dedicated page at www.ravenna-network.com/remote-production/.

Lessons Learned

The proof-of-concept demo worked well, and we are very pleased with the results. It required some expertise and fiddling with manual settings to get it to work.

Many lessons were learned from the proof of concept. Here are a few:

- “Local only” PTP synchronization locked to GPS works fine.

- There is packet loss, but this can be managed via SRT.

- Latency, at significantly less than 1 second, is lower than what we expected, but still substantial.

- To manage increased network delay, manual tuning of the link offset at each location was required, as expected, but the deep buffers of the receivers were able to compensate for it.

Future Considerations

There are a few items that require further study to make it a more practical and usable solution:

- A big one is how to transport timing through the cloud.

- We consciously decided on manual connections using Session Description Protocol (SDP) files to keep things simple. It would be valuable to be able to use Ravenna or NMOS registration and discovery over the cloud to automate the connection process.

- Ease of use would be greatly enhanced if the link offset could be handled automatically to compensate for network delay.

- To manage packet loss, it would be interesting to learn if SMPTE ST 2022-7 redundancy would work.

- Although SRT worked great, it would be good to experiment with RIST to understand if there are any performance or reliability benefits.
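As an illustration of the redundancy idea mentioned in the list above, the following Python sketch shows the core of an ST 2022-7-style receiver: the same RTP stream arrives over two paths, and the receiver keeps the first copy of each sequence number it sees. The class name, window size and packet data are hypothetical, and reordering by sequence number (normally done in the receiver's buffer) is reduced to a simple sort.

```python
from collections import deque

class SeamlessMerger:
    """Keep the first copy of each RTP sequence number seen on either path."""

    def __init__(self, window=1024):
        self.window = window    # how many recent sequence numbers to remember
        self._seen = set()
        self._order = deque()

    def accept(self, seq, payload):
        """Return the payload if `seq` is new, or None for a duplicate."""
        if seq in self._seen:
            return None
        self._seen.add(seq)
        self._order.append(seq)
        if len(self._order) > self.window:
            # Forget the oldest entry so 16-bit sequence numbers can be reused.
            self._seen.discard(self._order.popleft())
        return payload

# Hypothetical arrivals: path A lost packet 2, path B lost packet 3.
path_a = [(1, b"a1"), (3, b"a3"), (4, b"a4")]
path_b = [(1, b"b1"), (2, b"b2"), (4, b"b4")]

merger = SeamlessMerger()
received = {}
for seq, payload in path_a + path_b:
    out = merger.accept(seq, payload)
    if out is not None:
        received[seq] = out

print(sorted(received))  # sequence numbers recovered from the two paths
```

Each path is missing a different packet, yet the merged result contains all four. Whether this approach holds up over the public internet, where both paths may share a congested segment, is exactly the open question.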

The proof of concept showed there is a lot of promise for Ravenna in the cloud and we are excited and motivated to tackle these items soon.

Thanks to Angelo Santos of Ross Video for providing the drawing of the proof of concept setup; Nicolas Sturmel of Merging Technologies for programming the monitoring website and setting up the AWS cloud access; and Claudio Becker-Foss of DirectOut Technologies for providing thoughts on gateway programming.