The author is sales, marketing and PR manager for 2wcom Systems GmbH.

This article appeared in Radio World’s “Trends in Codecs and STLs for 2020” ebook.

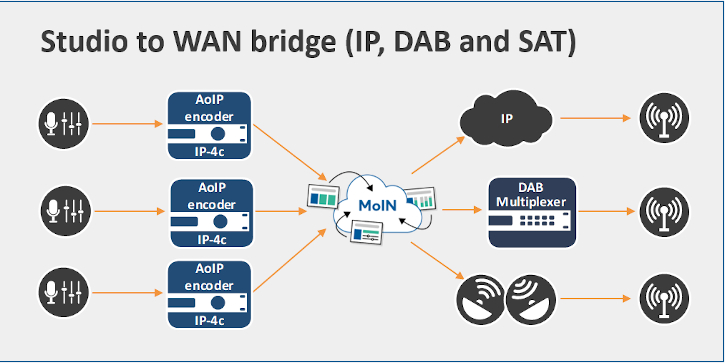

These days most studios run their AoIP networks to produce audio content. In theory, keeping the studio’s contribution separate from distribution offers flexibility at all sites; and separating the audio portion from transmitting sources such as satellite, DAB+ or IP provides various benefits.

Practically speaking, it’s now necessary to change perspective: IP offers broadcasters significantly more flexibility for the transmission of content, so more and more broadcasters and recording studios are deciding to expand IP-based networks.

But broadband and fiber optic are growing at very different rates internationally. So in addition to the use of IP-based structures, flexible alternative distribution paths must be available.

This leads to the question of how best to link contribution and distribution. The answer is to keep it in segments, because an increasing number of stations are multimedia, streaming audio and video along their facility’s networks.

First Segment: Studio Sites

To connect the studio’s networks with the headend and finally all transmitter sites, we should consider several aspects for our audio setup. The AoIP codec chosen for this should meet at least five key requirements.

First, it must be stable even when operating in WANs. This can be achieved by providing features for transmission robustness like redundant internal or external power supplies; software redundancy, e.g. forward error correction or SRT; and dual streaming or parallel streaming with different audio bitrates. Also, please consider a backup with an alternative source to ensure that your content is transmitted, say, via satellite in case IP lines fail.

Second, the codec must provide all audio formats normally used in a studio, such as Enhanced aptX, most AAC profiles or MPEG formats, Opus, Ogg Vorbis, PCM and Dolby. Moreover, compatibility with the different frame sizes of AAC profiles and Opus is important. Put each manufacturer through its paces to ensure that its products support all possible variants of an audio algorithm.

Third, the codec must support all common protocols as well as standards for internet interoperability such as Livewire+, Ravenna, AES67, EBU Tech 3326 or SMPTE ST2110 full-stack.

Fourth, flexible stream management must be possible in terms of channel scalability and MPEG multiplexing facilities. This includes precise network synchronization through support for PTPv2 and 1PPS. Especially for audio description of live events, synchronization down to the microsecond is essential.

Fifth, management and control of every AoIP codec in every studio of a network should be available remotely via a PC web interface, with support for SNMP, Ember+, JSON or NMOS. The main system control should also be accessible hands-on via local hardware control in case a WAN becomes inaccessible.

As a result of the above, all studios in a static or mobile network can fall back on a unified codec solution while keeping their independence. From a budgetary point of view, a system as described above provides the chance for all studios of a network to rely on existing AoIP setups.
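The first requirement above, falling back to an alternative path such as satellite when the IP lines fail, can be sketched in a few lines of Python. This is only an illustration of the selection logic: the source names, the priority values and the `healthy` flag are assumptions, and a real codec would derive link health from its own monitoring.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str       # hypothetical label for an input path
    priority: int   # lower number = preferred path
    healthy: bool   # assumed to come from a health check elsewhere


def pick_source(sources):
    """Return the preferred healthy source, or None if every source is down."""
    candidates = [s for s in sources if s.healthy]
    if not candidates:
        return None
    return min(candidates, key=lambda s: s.priority)


sources = [
    Source("ip-main", priority=0, healthy=False),       # primary IP line has failed
    Source("ip-secondary", priority=1, healthy=False),  # parallel stream is down too
    Source("satellite", priority=2, healthy=True),      # alternative backup path
]

active = pick_source(sources)
print(active.name if active else "no source available")
```

With both IP paths marked as failed, the sketch selects the satellite backup, so the program stays on air.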

Second Segment: Headend

The demand for the perfect link at the headend implies all of the requirements mentioned above, plus NTP (Network Time Protocol) for network synchronization. Moreover, the solution should simply collect the forwarded studio programs and make them available by transcoding the streams for the respective distribution paths: audio over IP, DAB+ or satellite. This could be achieved with multimedia-over-IP network server software that is flexible and can be integrated into existing structures as hardware, in VMs or as a containerized cloud service.

A system setup that fulfills the requirements described above ensures a “non-locked-in” arrangement. The headend/multiplexing system and studio systems are not hosted on the same network but kept separate from each other; thus, it is possible to replace one or the other if needed without bringing the companion operation down.
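As a rough sketch of the headend's role, the following Python snippet fans each collected studio program out to the distribution paths named above. The program names, the codec choices and the bitrates are illustrative assumptions, not settings of any particular product.

```python
# Illustrative target profiles; codecs and bitrates are assumptions.
TARGETS = {
    "aoip": {"codec": "opus", "kbps": 128},
    "dab+": {"codec": "he-aac-v2", "kbps": 48},
    "satellite": {"codec": "mpeg1-layer2", "kbps": 192},
}


def plan_transcodes(programs, targets=TARGETS):
    """Create one transcoding job per studio program and distribution path."""
    jobs = []
    for program in programs:
        for target, profile in targets.items():
            jobs.append({"program": program, "target": target, **profile})
    return jobs


# Hypothetical studio programs arriving at the headend.
jobs = plan_transcodes(["studio-a", "studio-b"])
for job in jobs:
    print(job)
```

The studios appear here only as program names. Adding or replacing a distribution path means changing the target table at the headend, which is the separation the "non-locked-in" arrangement relies on.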

An aside about virtualization. We are at the beginning of the use of virtualized products. Be aware that virtualization matters most when it comes to maintenance. This goes along with the wishes I have often heard from our customers: that a broadcast network should be expandable as easily as possible, that new services can be added with a mouse click and that the configuration of one device can be mirrored to another.

Scalability can be notably improved by using virtualization strategies. The possibilities that Docker or VMware have introduced, such as copying instances, taking snapshots or running them across multiple hardware devices, are a great help in scaling and maintaining networks.

That also has a major impact on needed rack space. Thanks to virtualization, applications can share the same hardware or even run as a swarm across multiple hardware units with different hardware configurations.

As a result, the number of devices needed is reduced to a minimum, because server hardware has in most cases a lot more processing power than the specialized hardware of codec manufacturers. Thanks to AES67 and other AoIP standards, the requirements for real hardware interfaces are slowly disappearing, and that is opening the door for virtualized solutions dependent on an all-IP infrastructure. With high bandwidth and robust IP lines, audio processing in the cloud becomes possible. In consequence, manufacturers have to pick up the pace and offer their solutions as virtualized software.

Third Segment: Imagination

With a little imagination, such networks have been put to use for a variety of transport duties. Here is a starter list.

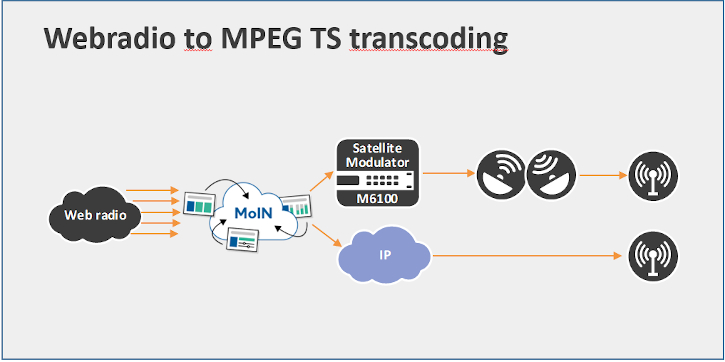

Icecast/HLS to DVB Transport Stream Transcoding: This is used by a number of customers who want to make webstreams available on a DVB transport stream that can be sent over cable networks or via satellite.

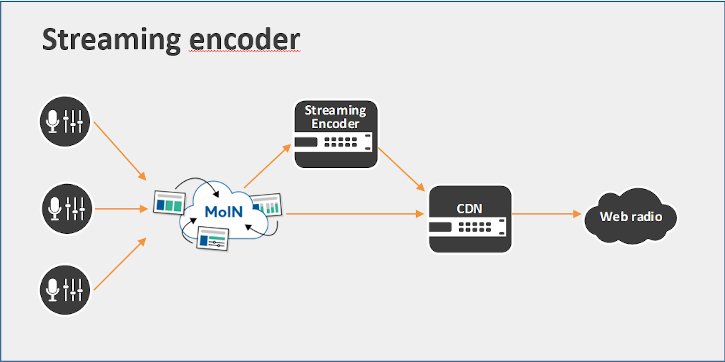

Streaming Encoder: The software can also feed a streaming encoder, for example the Wowza streaming cloud, or it can transcode the audio signals to adaptive-bitrate protocols like HLS that can be distributed to the end customer via a CDN.
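To make the adaptive-bitrate idea concrete, here is a minimal Python sketch that writes an HLS master playlist offering the same program at two audio bitrates. The bitrates and playlist paths are hypothetical; the codec strings are the usual identifiers for HE-AAC (`mp4a.40.5`) and AAC-LC (`mp4a.40.2`).

```python
def master_playlist(variants):
    """Build an HLS master playlist from (bits_per_second, codecs, uri) tuples."""
    lines = ["#EXTM3U"]
    # Sorting the tuples lists the lowest-bandwidth variant first.
    for bandwidth, codecs, uri in sorted(variants):
        lines.append(f'#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},CODECS="{codecs}"')
        lines.append(uri)
    return "\n".join(lines) + "\n"


# Hypothetical audio-only renditions of one radio program.
variants = [
    (128_000, "mp4a.40.2", "audio_128k/index.m3u8"),  # AAC-LC
    (64_000, "mp4a.40.5", "audio_64k/index.m3u8"),    # HE-AAC
]

playlist = master_playlist(variants)
print(playlist)
```

A player that fetches this playlist through the CDN can switch between the two renditions as the listener's connection changes.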

AES67 to WAN Bridge: With a great number of supported audio over IP protocols, a “multimedia over IP” network server can transcode signals from studio networks that use AES67, Dante, WheatNet, Ravenna or Livewire+ to a format that is suitable for wide-area networks. For example, the studio signals can be transcoded to Opus for low-bitrate transmission with SMPTE 2022-compliant error protection or with Secure Reliable Transport (SRT). That enables a studio-to-studio bridge that can cope with even stressful network conditions.
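A quick calculation shows why transcoding matters on the WAN leg. The Python sketch below compares the payload bitrate of a 48 kHz, 24-bit stereo PCM stream, a common AES67 format, with an Opus stream. The 128 kbit/s Opus bitrate is an assumed example, and the figures ignore RTP/UDP/IP headers and any error-protection overhead.

```python
def pcm_kbps(sample_rate_hz, bit_depth, channels):
    """Payload bitrate of uncompressed PCM audio in kbit/s."""
    return sample_rate_hz * bit_depth * channels / 1000


studio_kbps = pcm_kbps(48_000, 24, 2)  # linear PCM on the studio network
opus_kbps = 128                        # assumed Opus bitrate for the WAN leg

ratio = studio_kbps / opus_kbps
print(f"PCM payload:  {studio_kbps:.0f} kbit/s")
print(f"Opus payload: {opus_kbps} kbit/s")
print(f"Reduction:    {ratio:.0f}x")
```

Under these assumptions the PCM payload is 2,304 kbit/s, so the Opus stream needs one-eighteenth of the bandwidth. That leaves headroom on a constrained link for forward error correction or SRT retransmissions.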

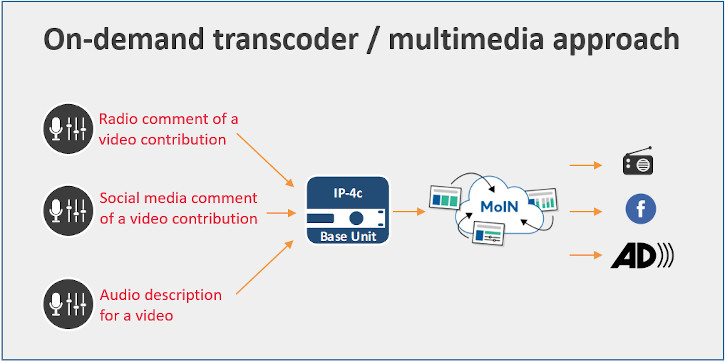

On-Demand Transcoders: The multimedia over IP network server software offers scalable activation of codecs in terms of number and time. This allows flexible handling of alternative audio streams, such as an audio description of a video to guarantee accessibility for blind and visually impaired persons. Or, when a multimedia contribution is produced, operators can process simultaneous audio commentaries for the video, the station website, social media and the radio broadcast.