In the mid-1990s, I was in the middle of a new 50 kW AM construction project in Denver. The 50-acre site was just north of what is now Denver International Airport.

The four towers had just been completed, the ground system was plowed in and the tower crew had departed, leaving just me and the folks helping me with the transmitter and antenna project.

One day, a couple of others on the crew and I were at one of the tower bases installing the antenna tuning unit when we heard the drone of a reciprocating engine overhead. Close! We looked up, and there was a little yellow airplane just a couple of hundred feet above the ground, about to fly right into the antenna field!

Just north and east of the site, about three miles away, was a little residential airport that had been there for decades. The folks who lived there had hangars adjacent to their houses and could fly in and out, a pretty sweet arrangement if you ask me.

Evidently, the pilot of the little yellow airplane was completely unaware that over the past couple of weeks, four 365-foot towers had gone up nearby, although they were clearly shown on the aeronautical charts.

There was a moment of panic as the plane got closer and closer to the towers. We started to run … but which way? If the plane hit a tower or a guy wire, that tower was sure to come down, and one falling tower would probably cause another to fall … and another, like dominoes. And then there would be the wreckage of the plane; where would it go?

So after a few steps, we stopped and just watched, knowing in that moment that running was pointless.

At the last second, the pilot must have spotted the four big red-and-white striped steel structures and turned sharply away, and we all breathed a big sigh of relief. If that pilot’s heart was pounding as hard as mine was, he might have needed a few minutes to calm down before heading for the landing strip.

EXPECTING THE UNEXPECTED

I was reminded of this episode recently as I was considering vulnerabilities and contingencies within our radio station facilities.

We do our best to plan for problems, failures and catastrophes, but it’s hard to know which way to run. Even putting aside, say, the occasional global pandemic, we can’t possibly anticipate every direction from which business threats will come, or how they will impact the operation.

We plan for power failures, and that’s a good place to start. Commercial power will fail on occasion — that’s as sure as death and taxes — and we are wise to plan for it with generators and UPSes to keep our equipment powered during the inevitable outages.

We plan for transmitter problems. Even the most reliable transmitters eventually fail or need to go down for maintenance, and in preparation for that, we install auxiliary transmitters and some means of switching between them.

Often, we plan for antenna problems. If it’s not lightning blowing a hole in an antenna bay, it’s ice loading up the antenna and making it (temporarily) unusable. Or we must remove excitation from an antenna while workers are near it during maintenance or repair work. In anticipation of that, many stations have auxiliary antennas fed by auxiliary transmission lines, ready to go on a moment’s notice.

But once in a while, something comes out of left field that we did not anticipate, and we find ourselves ill-prepared to deal with it.

We’ve all heard stories and seen news accounts of towers coming down in ice storms or as a result of severe weather. Not many stations can afford a spare tower. And even if a station does have an off-site auxiliary, what happens when an area-wide catastrophe, such as a tornado outbreak or an earthquake, impacts both the main and auxiliary sites?

Occasionally we hear about a station that is put off the air by a fire at the studio or transmitter site. That kind of catastrophe usually damages even the backup equipment, by water or fire retardant if not by fire and smoke, leaving the station with no way to get back on the air. How do you plan for that?

Radio station transmitter sites are often a target for thieves. One of my sites was recently burglarized. The alarm alerted the chief engineer and the police, and police were on site before the CE arrived. The thief got away with nothing of significance, but oh … what could have happened! We have on occasion had thieves hack their way through walls at some of our facilities. There is only so much you can do to harden a site against theft. How do you plan for the damage and loss of a break-in?

WHAT’S THAT DRIPPING SOUND?

And then there is the stuff nobody thinks about. Here at my office building, the maintenance people were testing the fire standpipe that runs from ground level to the roof. In the process, they pushed several hundred gallons of water through the pipe and dumped it on the roof all at once. It should have drained away through a network of roof drains and piping connected to the city storm sewer system.

During the test, however, the volume of water draining through that network was so great that it completely filled the horizontal run of the cast iron drain pipe … and it found a large crack in the top of the pipe, flooding my office! Thankfully my office is at the opposite end of the building from the studio and engineering spaces, but what if …

Or what if a fire sprinkler head failed, spraying water all over servers, switches, computers and other critical equipment? Who thinks about that? Which way do we run?

There is no way we can plan for every possible failure or catastrophe. We might as well accept that. But that doesn’t mean that we should be resigned to being off the air should the worst happen.

At my company, I periodically ask our chief engineers and engineering managers to take a hard look at every part of their facilities searching for vulnerabilities. Is there a piece of equipment that would, should it fail, take one or more stations off the air? What can be done to plug that hole?

Disaster recovery is big business in this day and age, and a lot of folks have gotten on that bandwagon. Our company has contracted with a nationwide provider that will respond quickly with whatever we need — temporary office/studio space, computers, servers, internet/telephone service, generators, fuel. It’s comforting to have that in our hip pocket.

Within the broadcast equipment and digital media realms, we are seeing a lot of disaster recovery pitches being made, most using some form of “cloud” hosting to provide backup or even primary service. This removes the “all eggs in one basket” scenario, spreading out the risk across multiple platforms and facilities. At the same time, it unavoidably adds some vulnerabilities, and that has to be considered as part of the risk/reward equation.

The point of all this is that we, as broadcast engineers, have a responsibility to be prepared for just about anything. It requires critical thought, careful analysis and clear communication to those who make the financial decisions.

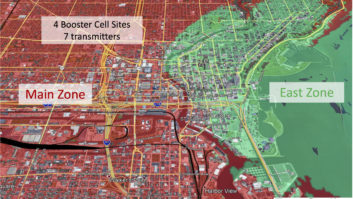

Often, it may mean repurposing or relocating certain pieces of equipment. For example, in one market, I had a spare but working transmitter that I chose to move to a site some 50 miles away, leaving it disconnected but ready. Should a wide-area catastrophe occur, I would have a working transmitter I could lay my hands on and use to get one station back on the air.

It’s all about contingency plans, looking for vulnerabilities, considering the possibilities of what could happen, prioritizing based on what is most likely to happen, and coming up with short-term solutions that can be implemented quickly.

So … which way will you run? How ready are you for the unimaginable?

AND HOW DO WE KNOW WHAT THE “WORST” EVEN IS?

When I wrote the above column, the world was still right-side up. The coronavirus was overseas news, and while it was of some concern, it was certainly not affecting the everyday lives of Americans. “The worst” was a tower falling over. A lot has changed since then; “the worst” has a whole new meaning.

Need drives development of technology, which is another way of stating the old adage, “Necessity is the mother of invention.” We have, in the past weeks, run headlong into a high wall of necessity. Thankfully, many broadcast facilities were, to some degree at least, prepared.

Internet connectivity, VNC and other remote operating applications, and studio/transmitter infrastructures that permit remote operation and facility management are at the core of such facilities. Imagine how we might have gotten through a similar lockout crisis just a few years ago before such technology and infrastructure were in place.

Tune through the frequencies in most markets and it sounds like business as usual. The hits keep playing, familiar voices keep us company and paid programs keep airing. Look behind the curtain, however, and in a lot of cases you will find nobody at the studios.

It is during crises that we learn. I think back to 9/11, to Katrina, Irene and countless other regional disasters and remember how broadcasters got the job done. COVID-19 is no hurricane. It is an entirely different kind of storm. But it is teaching us a lot of things, and broadcast engineers are earning their pay as never before.

As we emerge from this crisis – and we will – things are going to be different in a lot of ways. We’ll address that in future editions and in an upcoming ebook. For now, keep giving it your best, and above all else, stay well!

Cris Alexander, CPBE, AMD, DRB, is director of engineering of Crawford Broadcasting Co. and technical editor of RW Engineering Extra.