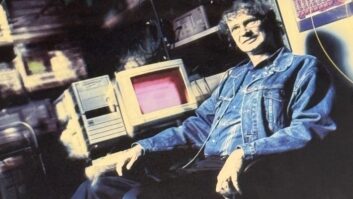

Frank Foti is executive chairman of The Telos Alliance and founder of Omnia Audio. We spoke with him for the recent Radio World ebook about audio processing.

Radio World: Frank, what would you say is the most important recent or pending development in the design or use of processors?

Frank Foti: The recipe for audio processing is never finished.

Aside from ongoing development to subjectively improve sonic performance, the function of processing has crossed over into the virtual realm. This concept was first fostered by Steve Church and me around 1994, as our early efforts began on Livewire, our audio-over-IP platform then under development.

Today, we have the tools to provide processing in the software-as-a-service (SaaS) format, as well as in a container. Yet we also know that there are those in the marketplace who remain most comfortable with their processing running in a dedicated appliance. Our work will always support that platform as well.

RW: What should we know about differences in processing needs for analog over the air, digital OTA, podcasts and streaming?

Foti: Telos Systems was first to introduce data-reduced audio more than 25 years ago. Steve Church and I were also the first to recognize the need for dedicated processing for conventional broadcasting and for audio streams.

In reality, digital OTA, podcasts and streaming are all basically one form or another of data-reduced technology. Thus, all conventional analog OTA transmissions for FM or AM need to employ a processor designed for that function, while digital OTA, podcasts and streaming need to use processing designed for data-reduced audio.

The main difference between conventional and data-reduced audio transmissions is the final limiter function. Suffice it to say, a processor designed for one system will not “play” well with the other type of system.

RW: How will cloud, virtualization and SaaS affect our processing marketplace?

Foti: It already has! The pandemic of 2020 escalated efforts that were already in place regarding this topic. If anything, now we’ll observe refinements to what’s already in place.

The concepts of the cloud and virtualization present flexibility to the broadcaster that was never possible before. Processing can be installed, adjusted, modified as a system, moved and updated, along with a host of other functions, from basically anywhere in the world. We even have the ability to transport monitor audio back to remote locations that might be outside the listening coverage area.

RW: Six years ago we had an ebook where we wondered how processors could advance much more, given how powerful their hardware and algorithms were. What about today?

Foti: This question gets asked fairly often. The Achilles’ heel of broadcast audio processing has always been the final limiting system. As much as we’d all love a free lunch, there isn’t one here, and there is a breaking point.

I’m constantly evaluating our own efforts, as well as those of others. Using choice content that is challenging for any algorithm, it is easy to tell a good limiter design from a poor one. Sadly, there are some current designs that leave a lot to be desired in this area.

Recent ongoing development at my own workstation has produced a new final limiting system that further reduces, and in some cases eliminates, sonic annoyances, namely audible harmonic and intermodulation distortion components.

RW: Has radio reached a point of “hypercompression,” with little further change in how loud we can make over-the-air audio? How do we break out of that plateau?

Foti: Loudness is really only a problem if it’s accomplished in an annoying fashion. That’s not being said to promote loudness. It is possible to create a “standout” loud on-air signal that is not annoying.

It comes down to the processor involved, as well as who sets it up. The term “hypercompression” can be defined differently based on interpretation.

I know there are some who absolutely love the sound of “deep compression” and the effect of the added intermod it creates, whereas there are others who use less dynamic compression and rely on the final limiting system for their end result. Both are capable of generating large levels of RMS modulation, yet they result in dramatically different sonic signatures.

Is one better than the other? It’s all very subjective, as is the question of what should truly be defined as hypercompression.

RW: As John Kean told us in another article, AES loudness metrics are moving to a lower target level for content, streams, podcasts and on-demand file transfer, like existing metrics for online and over-the-top video. If radio stays with its current environment — modulation limiting, reception noise, loudness wars — could radio see loss of audience due to listener fatigue?

Foti: Any broadcast facility that has lost audience due to listener fatigue needs to realize this occurred due to their approach to audio processing.

Loudness is not the issue. It’s how one achieves a loud signature that determines the listenability of a signal. There is a difference between the perception of a good, clean, loud signal and one that sounds like your head is squashed within the jaws of a vise. Both are loud, but not both are bad.

It really comes down to choices made by the broadcaster. Analogy: A car that goes fast is not necessarily a reckless auto. It comes down to the driver of the car. The same applies here.

RW: We read that processing can mitigate multipath distortion and reduce clipping distortion in content. How can users evaluate such claims?

Foti: Great question! I’ve done significant work in this area, and have recently created a method to test and observe the effects of induced multipath based on audio processing. Surely it could be further developed as a tool for broadcasters.

As of this writing, there is nothing on the market, but there are technical papers that address it. Suffice it to say, I’d be very wary of those who make ad hoc statements about multipath being exaggerated by processing without any technical evidence, test criteria or good engineering practice behind them.

RW: Nautel and Telos recently did a joint demo aimed at eliminating alignment issues by locking the FM and HD1 outputs from the processor through the HD air chain to the transmitter. What’s your take?

Foti: Having been in some of the discussions about this method, I can say this is a solid design that negates the need for outside or ancillary devices to monitor and adjust the time alignment. This is the first systemic approach, which further solidifies the digital transmission infrastructure. It’s very straightforward in design, and reduces the level of complexity within the digital transmission system.

We need to remember that as HD Radio evolved and was refined, the overall system and infrastructure have had to change. Now that the tech has matured, it’s possible to create a method that efficiently and reliably creates the broadcast signals for conventional and digital transmission.