LONDON — A new approach for creating and deploying interactive, personalized, scalable and immersive content is being researched in a European project known as “Orpheus.”

The audio control interface developed to produce “The Mermaid’s Tears.”

Orpheus involves 10 major broadcasters, manufacturers and research institutions from Germany, France, the United Kingdom, and the Netherlands, including Fraunhofer IIS, Magix, BBC Research & Development and Bayerischer Rundfunk.

The Orpheus team of engineers and various partners aims to create a system wherein individual elements within an audio environment can be manipulated by the listener rather than predetermined by a mixer before transmission. For example, a listener to a sporting event could change the levels between announcers and background music or switch between different channels of crowd noise. The listener becomes the mixer in this approach to audio.

STUDIO EVOLUTION

Chris Baume is senior research engineer at BBC R&D.

“Orpheus brings together spatially immersive audio, interactive and personalized experiences, and accessibility of content,” explains Chris Baume, senior research engineer at BBC R&D, which recently hosted the first project workshop at New Broadcasting House in London.

“For a number of years, BBC R&D has been doing experiments in how to put this together, and what sort of new experiences we can create. The earliest one was the BBC Radio 5 live football experiment, where we broadcast separate streams for crowd noise and commentary in a stadium. You could choose which side of the stadium you were on — whether you were with the home or away team,” he said.

BBC R&D’s Chris Baume works in the Orpheus studio.

“The Orpheus project came about because these were one-off prototypes using different technology, protocols and production techniques. We wanted to think longer-term about how we could do this as a more regular thing, by making it what we call ‘end-to-end;’ creating programs to be object-based from the very start; designing the tools and protocols to be able to stream that; record it as files; put it over the internet; and receive it at home — the whole broadcast chain.”

Baume says he expects an evolution in studios, rather than a revolution. “There’ll be elements of it that start to appear when studios are getting refurbished. The move to IP-based systems for routing audio signals gives us a lot of flexibility, as does the push into software. We’re not having to buy specialist hardware boxes that do just one thing; we can create it as a software package and update it continuously. It won’t be scrapping studios; it will be a step-by-step change,” he said.

The BBC’s Dave Walters speaks at the Orpheus project workshop in London.

Baume says the team recognizes that radio production is already highly optimized, because producers and engineers work under constant pressure of time and cost, which makes the workflow hard to improve.

“For instance, a lot of producers use notebooks to put together scripts, or Word documents for putting together running orders. It’s all completely unstructured and gets saved in random places; it gets lost and it doesn’t come together into the program. So one thing we can do is develop tools they can use instead which do feed into the broadcast chain, and which you can then use to segment up the program for reversioning or to provide additional information to the user.”

IMPROVING LISTENER EXPERIENCE

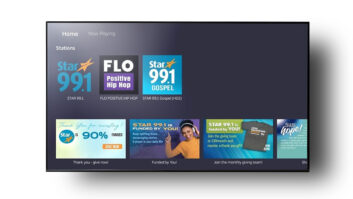

The Orpheus project’s dedicated all-IP studio at BBC Broadcasting House in London.

The project aims to improve the listener experience with enhancements such as personalized audio. “One experiment we’ve done,” says Baume, “is we can apply compression at the listener end, and adapt it dynamically depending on the background noise. So if it’s a very quiet room, we wouldn’t apply any dynamic range compression, but if you’re in a car and you can’t hear what’s going on, it will automatically know that and apply an appropriate level.”
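To illustrate the idea, here is a minimal Python sketch of noise-adaptive compression at the listener end. It is not the Orpheus implementation: the function names, the noise thresholds (30 and 70 dB SPL), the maximum ratio of 4:1 and the -20 dB compressor threshold are all assumptions chosen for the example.

```python
def compression_ratio(noise_db_spl, quiet_db=30.0, loud_db=70.0, max_ratio=4.0):
    """Map measured background noise to a compression ratio.

    All default values are hypothetical. A ratio of 1.0 means no compression.
    """
    if noise_db_spl <= quiet_db:
        return 1.0  # quiet room: leave the dynamic range untouched
    if noise_db_spl >= loud_db:
        return max_ratio  # noisy car: apply the strongest setting
    # Interpolate linearly between the quiet and loud settings.
    frac = (noise_db_spl - quiet_db) / (loud_db - quiet_db)
    return 1.0 + frac * (max_ratio - 1.0)


def compress_level_db(level_db, ratio, threshold_db=-20.0):
    """Static compressor curve: levels above the threshold are scaled down."""
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio


if __name__ == "__main__":
    for noise in (25.0, 50.0, 80.0):
        ratio = compression_ratio(noise)
        print(noise, ratio, compress_level_db(-10.0, ratio))
```

In a real player the noise level would come from a microphone measurement and the ratio would be smoothed over time, but the mapping from environment to compressor setting would follow the same pattern.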

One of the programs produced as part of the Orpheus effort is “The Mermaid’s Tears,” an interactive radio drama allowing listeners to choose one of three characters to follow.

Listeners can choose the character to follow in “The Mermaid’s Tears.”

“We produced this using object-based audio,” said Baume, “so we’re creating the program once, but defining three different experiences from that. We produced it live — we had the actors going to different rooms at different times using different microphones, but for example instead of creating three stereo mixes, we sent all the audio objects to the listener over the internet, and then created different sets of instructions to mix those together for the characters.”

Baume says this allowed them to add another level to audio experiences by handing over a limited level of control to the user. “But it’s still under the control of the producer, who defines what they want to hand over.”
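The principle of sending the objects once and rendering them differently per listener can be sketched in a few lines of Python. This is an illustration only: the object names, the sample values and the `render` function are hypothetical, and the production used its own tools and formats, which the article does not describe.

```python
def render(objects, gains):
    """Mix audio objects into one signal using per-object gains.

    `objects` maps an object name to a list of samples; `gains` is the set of
    mixing instructions for one experience. Objects not listed are muted.
    """
    length = max(len(samples) for samples in objects.values())
    mix = [0.0] * length
    for name, samples in objects.items():
        gain = gains.get(name, 0.0)
        for i, sample in enumerate(samples):
            mix[i] += gain * sample
    return mix


# Hypothetical audio objects, transmitted once to every listener.
objects = {
    "character_a_mic": [1.0, 1.0],
    "character_b_mic": [0.5, 0.5],
    "ambience": [0.25, 0.25],
}

# Producer-defined instructions: one set per character the listener can follow.
instructions = {
    "follow_a": {"character_a_mic": 1.0, "ambience": 1.0},
    "follow_b": {"character_b_mic": 1.0, "ambience": 1.0},
}

if __name__ == "__main__":
    for choice, gains in instructions.items():
        print(choice, render(objects, gains))
```

The listener only selects which instruction set to apply; the producer decides what those sets contain, which is how control stays with the program maker.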

Baume points out that the most in-demand set of features is accessibility. “One of the major complaints we get is that people can’t hear dialogue over background music or noise. So one very simple thing we can do with object-based audio is to transmit the dialogue separately to the background track, and either let the users control that level manually, or do that automatically.”
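As a rough sketch of how separate dialogue and background objects enable this, the Python below mixes the two with a dialogue boost that is either set by the user or derived automatically. The function names and the 15 dB target gap are assumptions made for the example, not values from the project.

```python
def db_to_linear(db):
    """Convert a gain in decibels to a linear amplitude factor."""
    return 10.0 ** (db / 20.0)


def mix_dialogue(dialogue, background, dialogue_boost_db=0.0):
    """Mix dialogue and background samples, boosting dialogue by the given amount."""
    gain = db_to_linear(dialogue_boost_db)
    return [gain * d + b for d, b in zip(dialogue, background)]


def auto_boost_db(dialogue_level_db, background_level_db, target_gap_db=15.0):
    """Return the boost needed for dialogue to sit `target_gap_db` above background.

    The 15 dB default is a hypothetical target. No boost is applied if the
    dialogue is already far enough above the background.
    """
    gap = dialogue_level_db - background_level_db
    return max(0.0, target_gap_db - gap)


if __name__ == "__main__":
    boost = auto_boost_db(-20.0, -25.0)  # dialogue only 5 dB above background
    print(boost, mix_dialogue([0.1, 0.2], [0.05, 0.05], boost))
```

Manual control corresponds to passing a user-chosen `dialogue_boost_db`; automatic control corresponds to computing it from measured levels first.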

The next steps for the BBC’s R&D team include producing a further pilot program, likely to be a non-linear experience with “variable depth,” allowing users to control the level of detail they go into within the content.

By the end of the project, which is funded by the European Commission’s Horizon 2020 program, the Orpheus team aims to publish a reference architecture and guidelines on how to implement object-based audio chains.

Will Jackson reports on the industry for Radio World from London.