Dominic Giambo is manager of technology at Wheatstone, responsible for its WheatNet-IP audio network and routing applications. He has been involved in industry AES67 plugfests as the lead engineer responsible for AES67 implementation in the Wheatstone AoIP network. He’ll speak on Sunday of the NAB Show in the session “Onsite or Cloud: Strategies for Mixing It Up.”

Radio World: What is the theme of your talk?

Dominic Giambo: There are some very practical ways to step into a more cloud-like operation without going all in and having to entrust your entire broadcast chain to a public cloud provider.

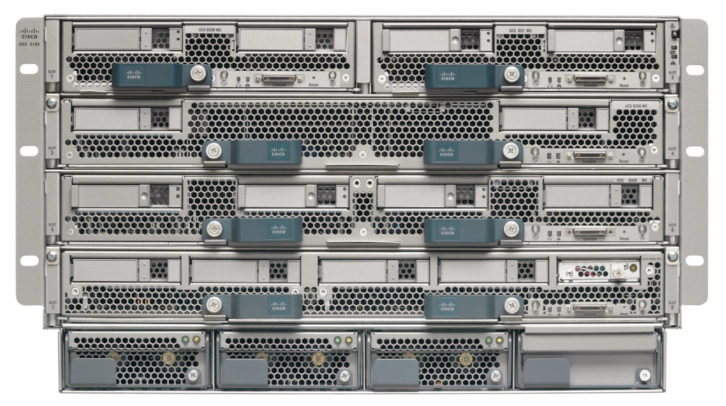

For example, commodity hardware like servers continues to get faster and less expensive, and we can use these to run instances of applications in place of discrete hardware. The benefits can be significant: you no longer have to maintain specialized hardware or carry the costs associated with it, like electrical, AC and space, and there’s a certain adaptability with software that you just can’t get with hardware alone.

The next step might be to go to a container model using Docker or a similar containerization platform. Containerization doesn’t have the large overhead that you find with virtualization per se, because you can run a number of different containers that share the same operating system. For example, one container could host WheatNet-IP audio processing tools, while another could host the station automation system, each totally isolated yet running off the same OS kernel.

Should you decide to move onto a public cloud provider like Amazon or Microsoft, these containers can then be moved to that platform. Containers work well on just about all the cloud providers and instance types. Most providers even offer tools to make it easy to manage and coordinate your containers running on their cloud.

Software as a Service applications that consolidate functions and don’t have critical live broadcast timing requirements are replacing rows of desktop computers and racks of processing boxes.

Streaming and processing are good examples. Migrating these to a cloud environment is straightforward if you already have them running on your servers. The hard work is already done, plus you’ll still have the servers for redundancy or testing and trying new ideas.


RW: Please expand why this is important.

Giambo: If you have a server, for example, you already have the beginnings of virtualization in the sense that you can offload some of the functions performed on hardware with instances of software.

There is really no need to invest in a big architecture migration plan. Just about every modern station has a server or two in its rack room with some room to run a virtual mixer or instances of audio processing feeding the transmitter site or a stream. The work is simply moved onto a server CPU instead of being performed on a dedicated hardware unit, and as commodity hardware, servers tend to get more powerful more quickly than a dedicated piece of hardware that might be updated less frequently.

RW: What pieces of the broadcast airchain are moving to the cloud?

Giambo: Playout systems are moving to the cloud, and that makes a good case for the mix engine to also be in the cloud.

Latency is always going to be an issue with programming going into and out of a server (or cloud) if it’s far away, but there are effective ways to deal with this. For example, I can see how a local talk show might still be mixed locally in order to preserve the low latency needed for mic feeds into that mix, but it might be mixed using client software that is based in the cloud or on a regional server for general distribution.

All kinds of processing could be done in the cloud, as well as encoding/streaming tasks for content delivery.
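The latency trade-off described in this answer can be illustrated with a small budget check. This is only a sketch: the `MixLocation` type, the `fits_budget` helper, the delay figures and the 20 ms monitoring budget are all hypothetical values chosen for illustration, not measurements from any Wheatstone product or cloud provider.

```python
from dataclasses import dataclass


@dataclass
class MixLocation:
    """A candidate place to run the mix engine (all figures are assumed)."""
    name: str
    one_way_network_ms: float  # assumed one-way network delay to the engine
    processing_ms: float       # assumed buffering/processing delay in the engine

    def round_trip_ms(self) -> float:
        # Mic audio travels to the engine, is mixed, then returns to the
        # talent's headphones, so the network delay is paid twice.
        return 2 * self.one_way_network_ms + self.processing_ms


def fits_budget(candidates, budget_ms):
    """Return the names of candidates whose round trip stays within budget."""
    return [c.name for c in candidates if c.round_trip_ms() <= budget_ms]


# Illustrative numbers only.
candidates = [
    MixLocation("local studio", one_way_network_ms=0.5, processing_ms=2.0),
    MixLocation("regional server", one_way_network_ms=5.0, processing_ms=5.0),
    MixLocation("public cloud", one_way_network_ms=30.0, processing_ms=10.0),
]

# Assumed budget for live mic monitoring; a real figure depends on the show.
usable = fits_budget(candidates, budget_ms=20.0)
print(usable)
```

With these assumed figures, the live mic mix stays local or regional, while tasks without tight timing requirements, such as encoding for distribution, could run farther away.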

RW: What are the main arguments for doing so?

Giambo: Cloud, and software generally, buys you greater flexibility for adding on studios, for sharing resources between regional locations, and even for riding out the supply chain interruptions that can affect a hardware-only operation. We can use commodity hardware to do what we might have done with specialized hardware in the past, and that is only going to get more flexible and affordable as time goes on.

RW: We saw headlines in late 2021 when Amazon Web Services had several technical failures. What lesson should broadcasters take from that in planning their infrastructures?

Giambo: That is something that must be considered in any cloud deployment, for anyone using the cloud. Clearly these platforms are in the bad guys’ crosshairs. There are many points of failure. Some industries can be more tolerant of interruption, but live broadcast is not one of them.

We need to take this in steps. We may be able to get many of the benefits of cloud by moving towards a software-based approach first, such as running this software on servers with ever-increasing power and then distributing the control of that equipment remotely using virtual consoles. In a later step these same servers could be re-tasked for use in redundant backup scenarios alongside cloud resources to mitigate the security risks of a cloud-based approach.

Yes, cloud services purport to offer redundancy, but as we’ve seen, it doesn’t always work that way.
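The idea of keeping local servers as a backup alongside cloud resources can be sketched as a simple priority list with health checks. This is a minimal illustration, not a description of how any particular product handles failover: the `pick_source` function, the source names and the stand-in health checks are all hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple

# Each source is a (name, health_check) pair, listed in order of preference.
Source = Tuple[str, Callable[[], bool]]


def pick_source(sources: Sequence[Source]) -> Optional[str]:
    """Return the first source whose health check passes, or None."""
    for name, is_healthy in sources:
        try:
            if is_healthy():
                return name
        except Exception:
            # A check that raises is treated the same as an unhealthy source.
            continue
    return None


# Stand-in checks: here the cloud engine is assumed to be unreachable.
sources = [
    ("cloud mix engine", lambda: False),
    ("local backup server", lambda: True),
]

active = pick_source(sources)
print(active)
```

In practice the health checks would probe the actual audio path rather than return fixed values, and the switch would need to be tested regularly, since redundancy that is never exercised may not work when it is needed.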

RW: What else should we know?

Giambo: For most broadcasters, virtualization or cloud isn’t the goal any more than adding a new codec or AoIP system is the goal. The great thing about software virtualization is that it uses enterprise commodities, which will inevitably find their way into studios and rack rooms. In that sense, the planning has already been done, so now it’s just a matter of taking advantage of what you can do with what you have already.